I tested it and the process of creating the chains is now about 300 times faster

-

MIDI into [seq] and Markov chains

-

@ingox Great work, thanks for sharing. Great idea to include the state saving mechanism, in fact that subpatch can be taken as a nice starting point for state saving that requires a specific order of execution. As far as I'm concerned, of course you or anyone else can do whatever they want with the code I posted, which in turn was taken from somewhere else as you mentioned.

Out of curiosity, you say it's 300 times faster compared to which patch? Sounds like an amazing improvement.

-

@weightless This was stupid. I didn't specify the order. My patch is actually a bit slower...

-

@ingox Oh I see, well at this point it's not likely to get much simpler than this I reckon, and it's probably fast enough even with very large inputs.

-

@ingox @weightless I would agree to release the abstraction into the public domain (if I am in the position to do that). And I do not mind if the license is GPL or BSD ...

-

A slight improvement in speed: This version uses [array] to store the indices. To create the chains, the array is combined with the end of the array and chains are taken directly from this combined array. The downside is that the structure of the source material that is in the indices cannot be looked at like it was with [text], but for practical purposes, this should not be an issue. Also some other slight improvements.

This is now in the public domain. Thanks again for the great collaboration!

Edit:

Some more slight improvements. I also added the clipping of the order again, as I think it contributes positively to the user experience. It is explained in the help file and there is a message when the chains are being created, so it should be reasonable.

-

This looks awesome. I don't get how the lists get encoded as one item (for chords). Can anyone please enlighten me?

-

@porres The lists are stored in a text; the Markov chains are simply built upon the line numbers of that text, i.e. over the indices.
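To illustrate the idea outside of Pd, here is a minimal Python sketch of storing lists on lines and working with the line numbers only. The function name `encode_as_indices` is made up for this example, and it assumes that identical chords share one line, which may or may not be how the abstraction handles duplicates.

```python
def encode_as_indices(events):
    """Store each distinct event (note or chord) once and refer to it by line number.

    Assumption for illustration: identical events share a single line.
    """
    lines = []     # plays the role of the text: one stored list per line
    line_of = {}   # event -> line number where it is stored
    indices = []   # the source material rewritten as line numbers
    for event in events:
        key = tuple(event)
        if key not in line_of:
            line_of[key] = len(lines)
            lines.append(key)
        indices.append(line_of[key])
    return lines, indices


# A C major chord, a single note, the same chord again, then an F major chord.
chords = [(60, 64, 67), (62,), (60, 64, 67), (65, 69, 72)]
lines, indices = encode_as_indices(chords)
# The chain only ever sees `indices`; a chord is looked up again on output.
```

The Markov chain then only has to deal with plain integers, and a chord of any size is recovered by looking up `lines[index]` when the chain is played.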

-

yeah, now that I had a deeper look into it, I get it

nice way to make multidimensional arrays

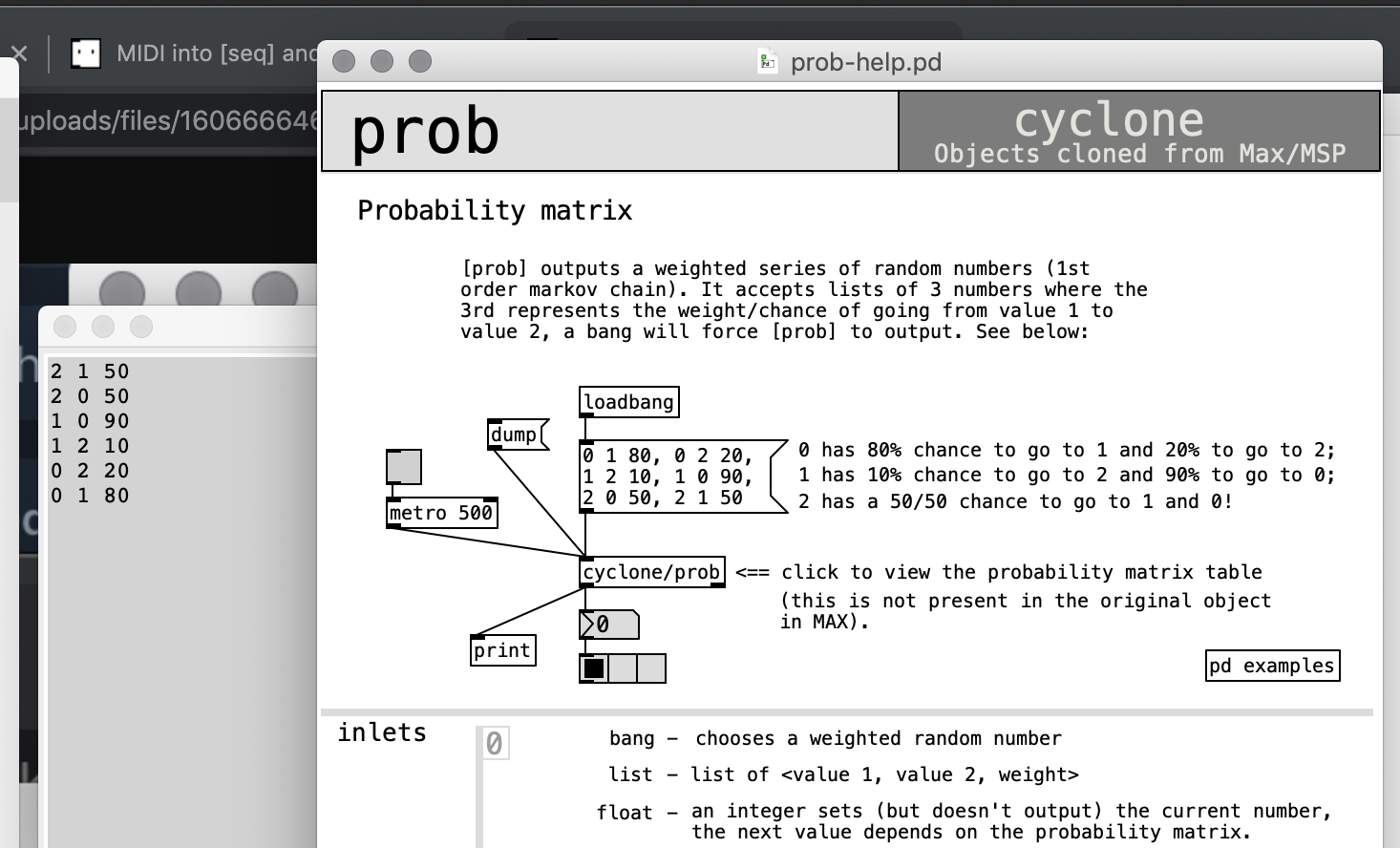

anyway, I was working on an object based on cyclone/anal + cyclone/prob for ELSE, but I'm gonna try and make something like this now! I just miss the possibility to set a probability transition matrix like in prob, but I may be able to work on this patch and get there.

great work and thanks for this

-

@porres Well, the [markov] object takes source material and generates something like an implicit probability transition matrix from that. If you already have a probability matrix, you only need a starting point and can play the Markov chains immediately. A much simpler abstraction can do that. Mixing the two approaches seems complicated, since [markov] follows a different philosophy, i.e. it allows adding more source material later in the process.

It could be interesting to have two separate abstractions: one to generate a probability matrix from source material and another one that plays Markov chains from it. That way you can have both approaches and combine them.

This would also be similar to the combination of [anal] and [prob], but as a generalized approach to have markov chains of arbitrary length.
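As a rough sketch of that split (in Python rather than Pd, and not how [markov] is actually implemented), the two halves could look like this. The names `build_table` and `play` are hypothetical; the table is kept as raw counts per state, which is one possible form of an implicit transition matrix for chains of arbitrary order.

```python
import random
from collections import defaultdict


def build_table(indices, order):
    """Count which index follows each run of `order` indices in the source."""
    table = defaultdict(lambda: defaultdict(int))
    for i in range(len(indices) - order):
        state = tuple(indices[i:i + order])
        following = indices[i + order]
        table[state][following] += 1
    return {state: dict(counts) for state, counts in table.items()}


def play(table, start, steps, rng=random):
    """Walk the table from `start`, choosing each next index by its count."""
    state = tuple(start)
    out = list(state)
    for _ in range(steps):
        counts = table.get(state)
        if not counts:
            break  # dead end: this state never had a successor in the source
        following = rng.choices(list(counts), weights=list(counts.values()))[0]
        out.append(following)
        state = state[1:] + (following,)
    return out


# Second-order table from a short index sequence.
table = build_table([0, 1, 0, 2, 0, 1], order=2)
result = play(table, (0, 1), 4)
```

In this arrangement the table is the only thing shared between the two parts, so a hand-written table could be passed to `play` just as well as a generated one.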

The question is rather if it is a realistic scenario to have a complex probability matrix for markov chains of higher order. [markov] is built as a basic machine learning tool.

-

I have been working with the Markov object with great success, but my patch fails in loading the saved state.

I get this when I reload my patch:

savestate

... couldn't create

Using PD 0.48.1.

Any ideas on how to debug the savestate function?

-

@MikkelM Since [savestate] is a newer object (it has existed since 0.49, see http://msp.ucsd.edu/Pd_documentation/x5.htm), I would try to use the current PD version.

-

Ok! I am using PD on a Tiny Core Linux platform that runs 0.48.1 and I don't think I dare to touch it again soonish.

-

@ingox I see now that the approach of defining a probability matrix is hard with this abstraction. I guess we'd need to generate a third [text define] which properly encodes a probability matrix (of any order). This could be generated from the input like the $0-markov text, but once we're editing and creating it as the source of the chain, we'd need to create $0-memory and $0-markov from it...

so yeah, seems like a lot of trouble.

Anyway, hope you don't mind, I'm including a variation of this abstraction in my ELSE library

-

@porres Sure thing, it is public domain.

And yes, this abstraction is basically creating a form of probability matrix out of the source material. If you already have the matrix you don't need most of the steps and can basically directly play the chains...

This abstraction is something like a very basic machine learning approach: Play some notes or read a midi file into it and get new stuff out of it that is computer generated, but based on human creativity.

-

@ingox said:

If you already have the matrix you don't need most of the steps and can basically directly play the chains...

the thing is that I was talking about another form of matrix like the one from [prob] which is kinda intuitive, unlike the one we have here

-

@porres Maybe you can post some sample data of your matrix?

-

just check cyclone/prob please, that's it

-

@porres This uses [array random] to move through the chains:

In markov_matrix_demo.pd you can see that the probabilities actually do match up.

The first value of the prob matrix could also be an index of a larger chain, the second value could also be the index of a chord. This could be incorporated or left outside the object. Only the length of the chain cannot be recalculated from within the system.

This does not include any checks for duplicates or consistency, so [markov_matrix] should be reset first and then the matrix data should be correct.
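For readers without the patch at hand, the general idea of walking a [prob]-style matrix can be sketched in Python. This assumes the matrix is given as (from, to, weight) triples in the style of cyclone/prob; `load_matrix` and `walk` are made-up names and the sample data is invented for illustration, not taken from markov_matrix_demo.pd.

```python
import random


def load_matrix(triples):
    """Turn (from, to, weight) triples into a nested dict.

    No consistency checks are done: a repeated (from, to) pair
    simply overwrites the earlier weight.
    """
    matrix = {}
    for src, dst, weight in triples:
        matrix.setdefault(src, {})[dst] = weight
    return matrix


def walk(matrix, start, steps, rng):
    """Move from state to state, picking each successor by its weight."""
    state = start
    out = [state]
    for _ in range(steps):
        row = matrix.get(state)
        if not row:
            break  # no outgoing transitions defined for this state
        state = rng.choices(list(row), weights=list(row.values()))[0]
        out.append(state)
    return out


# Invented sample data: from 1 always go to 2; from 2 go to 1 three times as often as to 2.
triples = [(1, 2, 1), (2, 1, 3), (2, 2, 1)]
matrix = load_matrix(triples)
path = walk(matrix, 1, 1000, random.Random(0))
```

The states here are plain numbers, but as noted above they could just as well be indices into a table of longer chains or chords.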